In 1946, a twenty-year-old Soviet naval engineer named Genrich Altshuller started a new job at a patent office in Baku, Azerbaijan.

He reviewed invention proposals, refined documentation, prepared patent applications.

Most people would have treated it as paperwork.

But Altshuller treated it as a dataset.

He wanted to know: do successful inventions follow patterns?

Over the next several years, he analyzed more than 200,000 patents. He studied the problems these inventions solved, the contradictions they resolved, the strategies their inventors used. And he found something that would change how a part of the engineering world thinks about innovation: the best inventors kept using the same problem-solving patterns, across industries and decades, often without realizing it.

He called the resulting framework TRIZ, a Russian acronym for "Theory of Inventive Problem Solving."

That was nearly eighty years ago.

We've spent the last eight years building a company with it.

Why we're writing this

Every decade or so, a new innovation methodology shows up with fanfare.

Design Thinking. Lean. Agile. Jobs-to-be-Done.

Each wave brings books, certifications, conferences, and a predictable public arc: excitement, mass adoption, then a new framework replaces the old one, and the cycle restarts.

We think there's something worth looking at in the contrast between that cycle and a methodology grounded in eighty years of patent analysis.

We're not trashing other frameworks.

Several of them are useful, and many of our customers use them alongside what we do. Hell, I'm writing this right now with "Jobs to be Done", "Good to Great", and "The 7 Habits of Highly Effective People" staring me in the face.

But the R&D professionals we work with already know about TRIZ.

They encountered it in grad school or in a workshop somewhere.

They understand the logic, yet very few use it day to day.

That gap between knowing and doing is what this piece is about.

What TRIZ actually is (for those who half-remember)

TRIZ starts with a plain observation: when you try to improve one aspect of a technical system, something else usually gets worse.

Make a structure lighter, it becomes more fragile.

Reduce cost, you might compromise durability.

Increase speed, you lose precision.

Altshuller called these conflicts "technical contradictions."

Most engineers deal with them through compromise.

You accept a trade-off. You find a balance that's good enough.

TRIZ says there's a third path: eliminate the contradiction.

How? By studying how contradictions get resolved in practice, across every industry and scientific discipline, Altshuller identified 40 recurring strategies, which he called inventive principles.

These are patterns observed in patents, pulled from the record of what inventors actually did when they solved hard problems.

He organized these into a contradiction matrix: a grid where you map the parameter you want to improve against the parameter that worsens, and the matrix points you to the inventive principles most likely to resolve that specific tension.
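The lookup itself is simple enough to sketch as a data structure. Below is a minimal Python sketch. The principle numbers and names (1 Segmentation, 15 Dynamics, 28 Mechanics substitution, 35 Parameter changes, 40 Composite materials) come from Altshuller's standard list of 40. The two matrix cells and the `suggest` helper are hypothetical placeholders for illustration only and were not copied from the real 39-by-39 matrix.

```python
from typing import Dict, List, Tuple

# A few of Altshuller's 40 inventive principles, by their standard numbers.
PRINCIPLES: Dict[int, str] = {
    1: "Segmentation",
    15: "Dynamics",
    28: "Mechanics substitution",
    35: "Parameter changes",
    40: "Composite materials",
}

# Hypothetical cells keyed by (parameter to improve, parameter that worsens).
# Placeholder values for illustration only; NOT copied from the real matrix.
MATRIX: Dict[Tuple[str, str], List[int]] = {
    ("weight of moving object", "strength"): [1, 40, 35],
    ("speed", "accuracy"): [28, 15, 35],
}


def suggest(improving: str, worsening: str) -> List[str]:
    """Look up one contradiction; an empty list means the cell has no suggestion."""
    numbers = MATRIX.get((improving, worsening), [])
    return [f"{n}. {PRINCIPLES[n]}" for n in numbers]


print(suggest("speed", "accuracy"))
# ['28. Mechanics substitution', '15. Dynamics', '35. Parameter changes']
```

The lookup is the easy part. The hard work sits on either side of it: mapping a concrete problem onto the abstract parameters, then turning a principle like "Segmentation" back into an actual design.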

The deeper insight, and the one that shapes everything we do at Findest, is functional thinking.

TRIZ teaches you to separate the function you need from the form you're used to. Instead of asking "how do I make a better steel coating?", you ask "what am I actually trying to achieve?"

The answer might be "protect metal from oxidation," which opens a different solution space.

Coatings are one way to protect metal from oxidation. They're not the only way.
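A toy Python sketch shows the difference between searching by form and searching by function. The approach list and the names `search_by_form` and `search_by_function` are illustrative assumptions, and the list is not a complete catalogue of options. A keyword query for "coating" finds one entry. A query for the function finds every approach that does the job, including cathodic protection, inhibitors, and a change of alloy.

```python
from typing import Dict, List

# Illustrative index (hypothetical): each known approach mapped to the function it performs.
APPROACHES: Dict[str, str] = {
    "paint or polymer coating": "protect metal from oxidation",
    "hot-dip galvanizing": "protect metal from oxidation",
    "cathodic protection": "protect metal from oxidation",
    "corrosion inhibitor added to the environment": "protect metal from oxidation",
    "switch to a stainless (chromium-bearing) alloy": "protect metal from oxidation",
    "thermal insulation blanket": "reduce heat loss",
}


def search_by_form(keyword: str) -> List[str]:
    """Form-first: only approaches whose name contains the familiar keyword."""
    return [name for name in APPROACHES if keyword in name]


def search_by_function(function: str) -> List[str]:
    """Function-first: every approach that performs the required function."""
    return [name for name, performs in APPROACHES.items() if performs == function]


print(search_by_form("coating"))
# ['paint or polymer coating']
print(search_by_function("protect metal from oxidation"))
```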

This shift, from thinking about solutions to thinking about functions, is the step most R&D teams skip.

Because the pressure to deliver pushes them toward what they already know.

In fact, faced with any problem, most professionals reach for the solutions they were trained to use.

The gap between knowing and doing

TRIZ has a problem, though.

Most R&D professionals we've worked with across 400 companies and two dozen industry verticals know about it.

Many can explain the contradiction matrix. Some have attended dedicated training.

Yet when a new challenge lands on their desk on a Tuesday morning, TRIZ is the last thing that comes to mind.

Why?

For one thing, TRIZ is hard to apply by hand. You need to translate your specific problem into the abstracted parameters of the contradiction matrix, figure out which of the 40 principles apply, then translate those principles back into concrete solutions for your domain. That loop requires deep domain knowledge and TRIZ fluency, a combination that takes months or years to build.

In organizations where speed matters, that's a hard sell.

A Design Thinking workshop can run in a day.

Lean offers a neat checklist that guarantees momentum if followed.

TRIZ gives you a 39-by-39 matrix and, to borrow Apple's old slogan, tells you to "Think Different."

Not to mention, whether you think it's fair or not, there's a credibility issue.

TRIZ grew within Soviet engineering circles and spread through practitioner communities rather than through peer-reviewed science.

Engineers trained in the scientific method are cautious about a framework that claims to work across all domains but spent its early decades outside controlled validation studies.

More recent research in sustainability has provided stronger evidence, with studies documenting 20-50% improvements in environmental impact.

But the credibility chasm hasn't fully closed.

And there's the organizational challenge.

Even when individual engineers become TRIZ practitioners, the methodology rarely spreads through their organization.

It sits with individuals, used by the people who trained in it and largely invisible to everyone else.

And without shared language and integrated tools, TRIZ never comes down off the bookshelf.

We think all of these barriers are real.

We also think they're solvable.

That's what the last eight years have been about.

One kick, ten thousand times

There's a Bruce Lee quote that I love probably more than I should:

"I fear not the man who has practiced 10,000 kicks once, but I fear the man who has practiced one kick 10,000 times."

Findest didn't invent TRIZ or improve it theoretically. We applied it. Over and over, across 3,000 projects for 400 companies in more than two dozen industry verticals, from chemistry and biology to mechanical engineering and materials science. Enough times that the methodology became instinct for our team.

Our people are scientists themselves: chemists, biologists, engineers, even a rocket scientist. They stretch your domain expertise without replacing it. When an R&D team brings us a well-defined challenge, our first move is the functional-thinking move: we step back from the team's definition of the problem and look at the underlying function. What are you actually trying to achieve? Only once we've widened the frame do we narrow again, this time grounded in evidence rather than assumption.

Of course, when all you have is a hammer, everything looks like a nail. We're acutely aware that many projects don't need "our kick." But many do, and our team knows instinctively which is which.

That process, function-first scoping followed by structured evidence gathering, is what we've repeated three thousand times. The method didn't change. We just got very good at it.

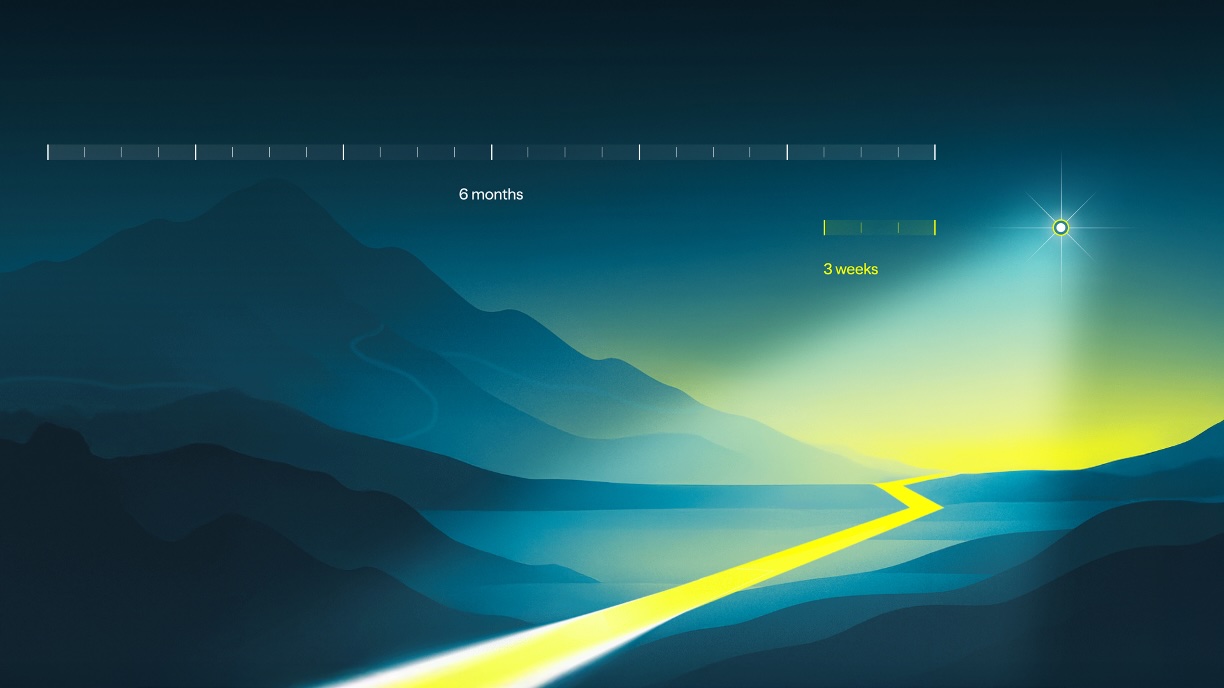

The numbers bear this out: we help organizations reduce their initial research phase from a typical 6-12 months down to a month or two.

Teams invest only 3-6 hours of input over that time.

And 85% of our customers either refer us to colleagues or come back with another challenge.

Building TRIZ into software

We started with a service. But we recognized early that the method's value shouldn't depend on having our team in the room.

The Universe is our R&D platform, and it's where the connection between an 80-year-old methodology and modern AI becomes concrete.

Most researchers don't use TRIZ daily because the manual work is too heavy.

You have to reframe a problem in functional terms, search across domains for solutions, and verify whether those solutions actually address the contradiction.

Each step is sound.

Together, they're a lot to carry when you're also juggling 20 open tabs and a stack of papers you haven't read yet.

We automated that loop. When a researcher asks The Universe a question, the system doesn't search for keywords.

It searches for functions. Instead of looking for "steel coating," it looks for "protect metal from oxidation."

That's TRIZ-based functional scoping, across 250 million scholarly works from 250,000 sources.

The 30 to 50 search iterations a skilled researcher would run by hand (swapping keywords, testing boundary conditions, tracking down references) all happen in the background.

A separate Judge Model checks every document one by one to verify it actually answers the question.

If the evidence isn't there, the system says so. It won't fill gaps with confident-sounding fabrication.
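Here is a minimal sketch of that general pattern over a tiny in-memory corpus. It expands one functional query into variants, retrieves candidates for each variant, checks every candidate individually, and says so explicitly when nothing survives. This is not The Universe's implementation. Every name here is hypothetical, retrieval is naive substring matching, and `toy_judge` is a keyword rule standing in for a separate judge model.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

STOPWORDS = {"a", "from", "in", "of", "the"}
# Hypothetical vocabulary for the stand-in judge below.
MECHANISMS = ("coating", "cathodic", "inhibitor", "alloy")


@dataclass
class Document:
    doc_id: str
    text: str


def expand_queries(query: str, synonyms: Dict[str, List[str]]) -> List[str]:
    """Build query variants by swapping one word at a time for a listed synonym."""
    words = query.split()
    variants = [query]
    for word, alternatives in synonyms.items():
        if word in words:
            for alt in alternatives:
                variants.append(" ".join(alt if w == word else w for w in words))
    return variants


def retrieve(query: str, corpus: List[Document]) -> List[Document]:
    """Naive retrieval: every non-stopword of the query must occur in the text."""
    terms = [w for w in query.lower().split() if w not in STOPWORDS]
    return [d for d in corpus if all(t in d.text.lower() for t in terms)]


def toy_judge(question: str, doc: Document) -> bool:
    """Stand-in for a separate judge model (ignores `question`): accept only
    documents that name a concrete protection mechanism."""
    return any(m in doc.text.lower() for m in MECHANISMS)


def gather_evidence(question: str, queries: List[str], corpus: List[Document],
                    judge: Callable[[str, Document], bool]) -> List[str]:
    """Run every query variant, deduplicate hits, then judge each one individually."""
    candidates: Dict[str, Document] = {}
    for q in queries:
        for doc in retrieve(q, corpus):
            candidates.setdefault(doc.doc_id, doc)
    return [d.doc_id for d in candidates.values() if judge(question, d)]


def report(supported: List[str]) -> str:
    """Say so explicitly when nothing survives, rather than filling the gap."""
    if not supported:
        return "Insufficient evidence found."
    return "Supported by: " + ", ".join(supported)


corpus = [
    Document("d1", "Zinc coating protects steel from oxidation in marine environments"),
    Document("d2", "Cathodic protection prevents corrosion of buried steel pipelines"),
    Document("d3", "Steel that is not protected suffers corrosion"),
]
question = "protect steel from oxidation"
queries = expand_queries(question, {"oxidation": ["corrosion"]})
print(report(gather_evidence(question, queries, corpus, toy_judge)))
# Supported by: d1, d2
print(report(gather_evidence("protect titanium", ["protect titanium"], corpus, toy_judge)))
# Insufficient evidence found.
```

The "corrosion" variant retrieves d3, but the judging step rejects it. Retrieval casts the net wide, and verification decides what counts as evidence.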

The R&D professionals we serve are trained to be skeptical of what they read.

That's the standard we have to meet.

Why this matters beyond us

Most of the researchers we work with need a structured way to do what they already do well, just without the manual burden that forces them to narrow their scope too early or skip the broad search they know they should run.

They need a method that respects their expertise.

TRIZ does that.

It gives you a systematic way to see more of the solution space before you commit.

And it has been doing that for nearly eighty years, refined by practitioners across every technical domain and grounded in the record of how problems actually get solved.

The fact that it's old is a strength.

A methodology with eight decades of empirical backing is closer to bedrock than anything else we've found.

Samsung reportedly requires TRIZ competency for career advancement.

Intel has documented over $200 million in returns from TRIZ deployment.

Boeing, GE, Siemens, and Rolls-Royce have all built TRIZ into their engineering practices.

These are companies that need methods that work at scale, reliably, for years and decades.

TRIZ meets AI

The barriers that kept TRIZ on the shelf, the complexity, the manual effort, the cognitive load, are shrinking as AI matures.

Research groups have built systems like AutoTRIZ that use large language models to automate contradiction identification and principle recommendation.

Multi-agent TRIZ systems are in early testing, where different AI agents handle different parts of the methodology.

The TRAI2025 conference in Paris-Saclay was dedicated entirely to TRIZ and artificial intelligence.

The methodology was sound.

What held it back was the cost of applying it.

AI changes that equation.

The principles still come from eighty years of patent analysis.

Applying them just got much easier.

We've been working toward this for eight years.

Every project we've completed has been an exercise in making TRIZ practical for people who don't have time to learn TRIZ.

Where this leaves us

"Us" here means something broader than our company.

It means the R&D teams we work with, the researchers reading this, and everyone whose early-stage research determines whether a project succeeds, pivots, or stalls months later.

Scientific literature keeps growing.

The pressure to move faster isn't letting up.

The number of adjacent domains that might hold the answer to your problem expands faster than any one person can track.

In this environment, the old habit of sticking to what's familiar costs you relevance and competitive edge.

An 80-year-old method built on the observation that solutions and insight repeat across industries and disciplines is a good place to start.

The method works.

It works when the problem is chemical and the solution turns out to be mechanical.

It works when the team is stuck because they've been looking at form when they should have been looking at function.

It works because it was derived from what actually happened in hundreds of thousands of patents, not from what someone theorized should happen.

Eight years. Three thousand projects. One method.

We plan to keep going.

How about you?